iCub Is Growing Up – IEEE Spectrum

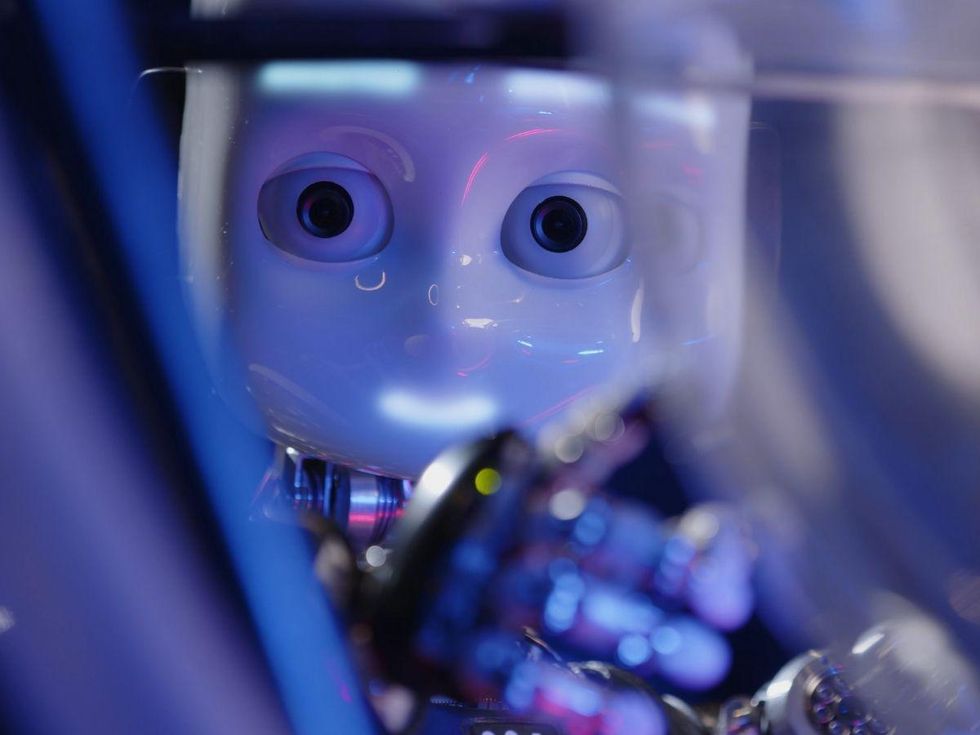

The ability to make decisions autonomously is not just what makes robots useful, it’s what makes robots robots. We value robots for their ability to sense what’s going on around them, make decisions based on that information, and then take useful actions without our input. In the past, robotic decision making followed highly structured rules: if you sense this, then do that. In structured environments like factories, this works well enough. But in chaotic, unfamiliar, or poorly defined settings, reliance on rules makes robots notoriously bad at dealing with anything that could not be precisely predicted and planned for in advance.

RoMan, along with many other robots including home vacuums, drones, and autonomous cars, handles the challenges of semistructured environments through artificial neural networks, a computing approach that loosely mimics the structure of neurons in biological brains. About a decade ago, artificial neural networks began to be applied to a wide variety of semistructured data that had previously been very difficult for computers running rules-based programming (generally referred to as symbolic reasoning) to interpret. Rather than recognizing specific data structures, an artificial neural network is able to recognize data patterns, identifying novel data that are similar (but not identical) to data that the network has encountered before. Indeed, part of the appeal of artificial neural networks is that they are trained by example, by letting the network ingest annotated data and learn its own system of pattern recognition. For neural networks with multiple layers of abstraction, this technique is called deep learning.

Even though humans are typically involved in the training process, and even though artificial neural networks were inspired by the neural networks in human brains, the kind of pattern recognition a deep-learning system does is fundamentally different from the way humans see the world. It’s often nearly impossible to understand the relationship between the data input into the system and the interpretation of the data that the system outputs. And that difference, the “black box” opacity of deep learning, poses a potential problem for robots like RoMan and for the Army Research Lab.


This opacity means that robots that rely on deep learning have to be used carefully. A deep-learning system is good at recognizing patterns, but lacks the world understanding that a human typically uses to make decisions, which is why such systems do best when their applications are well defined and narrow in scope. “When you have well-structured inputs and outputs, and you can encapsulate your problem in that kind of relationship, I think deep learning does very well,” says Tom Howard, who directs the University of Rochester’s Robotics and Artificial Intelligence Laboratory and has developed natural-language interaction algorithms for RoMan and other ground robots. “The question when programming an intelligent robot is, at what practical size do those deep-learning building blocks exist?” Howard explains that when you apply deep learning to higher-level problems, the number of possible inputs becomes very large, and solving problems at that scale can be challenging. And the potential consequences of unexpected or unexplainable behavior are much more significant when that behavior is manifested through a 170-kilogram two-armed military robot.

After a couple of minutes, RoMan hasn’t moved; it’s still sitting there, pondering the tree branch, arms poised like a praying mantis. For the last 10 years, the Army Research Lab’s Robotics Collaborative Technology Alliance (RCTA) has been working with roboticists from Carnegie Mellon University, Florida State University, General Dynamics Land Systems, JPL, MIT, QinetiQ North America, University of Central Florida, the University of Pennsylvania, and other top research institutions to develop robot autonomy for use in future ground-combat vehicles. RoMan is one part of that process.

The “go clear a path” task that RoMan is slowly thinking through is difficult for a robot because the task is so abstract. RoMan needs to identify objects that might be blocking the path, reason about the physical properties of those objects, figure out how to grasp them and what kind of manipulation technique might be best to apply (like pushing, pulling, or lifting), and then make it happen. That’s a lot of steps and a lot of unknowns for a robot with a limited understanding of the world.

This limited understanding is where the ARL robots begin to differ from other robots that rely on deep learning, says Ethan Stump, chief scientist of the AI for Maneuver and Mobility program at ARL. “The Army can be called upon to operate basically anywhere in the world. We do not have a mechanism for collecting data in all the different domains in which we might be operating. We may be deployed to some unknown forest on the other side of the world, but we’ll be expected to perform just as well as we would in our own backyard,” he says. Most deep-learning systems function reliably only within the domains and environments in which they’ve been trained. Even if the domain is something like “every drivable road in San Francisco,” the robot will do fine, because that’s a data set that has already been collected. But, Stump says, that’s not an option for the military. If an Army deep-learning system doesn’t perform well, they can’t simply solve the problem by collecting more data.

ARL’s robots also need to have a broad awareness of what they’re doing. “In a standard operations order for a mission, you have goals, constraints, a paragraph on the commander’s intent (basically a narrative of the purpose of the mission), which provides contextual info that humans can interpret and gives them the structure for when they need to make decisions and when they need to improvise,” Stump explains. In other words, RoMan may need to clear a path quickly, or it may need to clear a path quietly, depending on the mission’s broader objectives. That’s a big ask for even the most advanced robot. “I can’t think of a deep-learning approach that can deal with this kind of information,” Stump says.

While I watch, RoMan is reset for a second try at branch removal. ARL’s approach to autonomy is modular, where deep learning is combined with other techniques, and the robot is helping ARL figure out which tasks are appropriate for which techniques. At the moment, RoMan is testing two different ways of identifying objects from 3D sensor data: UPenn’s approach is deep-learning-based, while Carnegie Mellon is using a method called perception through search, which relies on a more traditional database of 3D models. Perception through search works only if you know exactly which objects you’re looking for in advance, but training is much faster since you need only a single model per object. It can also be more accurate when perception of the object is difficult, for example if the object is partially hidden or upside-down. ARL is testing these strategies to determine which is the most versatile and effective, letting them run simultaneously and compete against each other.

Perception is one of the things that deep learning tends to excel at. “The computer vision community has made crazy progress using deep learning for this stuff,” says Maggie Wigness, a computer scientist at ARL. “We’ve had good success with some of these models that were trained in one environment generalizing to a new environment, and we intend to keep using deep learning for these sorts of tasks, because it’s the state of the art.”

ARL’s modular approach might combine several techniques in ways that leverage their particular strengths. For example, a perception system that uses deep-learning-based vision to classify terrain could work alongside an autonomous driving system based on an approach called inverse reinforcement learning, where the model can rapidly be created or refined by observations from human soldiers. Traditional reinforcement learning optimizes a solution based on established reward functions, and is often applied when you’re not necessarily sure what optimal behavior looks like. This is less of a concern for the Army, which can generally assume that well-trained humans will be nearby to show a robot the right way to do things. “When we deploy these robots, things can change very quickly,” Wigness says. “So we wanted a technique where we could have a soldier intervene, and with just a few examples from a user in the field, we can update the system if we need a new behavior.” A deep-learning technique would require “a lot more data and time,” she says.

It’s not just data-sparse problems and fast adaptation that deep learning struggles with. There are also questions of robustness, explainability, and safety. “These questions aren’t unique to the military,” says Stump, “but it’s especially important when we’re talking about systems that may incorporate lethality.” To be clear, ARL is not currently working on lethal autonomous weapons systems, but the lab is helping to lay the groundwork for autonomous systems in the U.S. military more broadly, which means considering ways in which such systems may be used in the future.


Safety is an obvious priority, and yet there isn’t a clear way of making a deep-learning system verifiably safe, according to Stump. “Doing deep learning with safety constraints is a major research effort. It’s hard to add those constraints into the system, because you don’t know where the constraints already in the system came from. So when the mission changes, or the context changes, it’s hard to deal with that. It’s not even a data question; it’s an architecture question.” ARL’s modular architecture, whether it’s a perception module that uses deep learning or an autonomous driving module that uses inverse reinforcement learning or something else, can form parts of a broader autonomous system that incorporates the kinds of safety and adaptability that the military requires. Other modules in the system can operate at a higher level, using different techniques that are more verifiable or explainable and that can step in to protect the overall system from adverse unpredictable behaviors. “If other information comes in and changes what we need to do, there’s a hierarchy there,” Stump says. “It all happens in a rational way.”
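
To make the idea of a hierarchy concrete, here is a minimal sketch in Python of a simple, inspectable rule sitting above an opaque learned module and overriding it when its output leaves an allowed range. Everything in it is hypothetical and chosen for illustration: the `Command` type, the `learned_driver` stand-in, the `supervise` function, and the speed limit are assumptions, not a description of ARL’s software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Command:
    """A drive command plus a record of which module produced it."""
    speed_mps: float
    source: str


def learned_driver(terrain_roughness: float) -> Command:
    """Hypothetical stand-in for a learned driving module.

    A real module would be a trained model; here it is a fixed formula
    so that the example is deterministic.
    """
    return Command(speed_mps=3.0 - 2.0 * terrain_roughness, source="learned")


def supervise(proposed: Command, max_speed_mps: float) -> Command:
    """Higher-level check that is easy to read and verify.

    Commands inside [0, max_speed_mps] pass through unchanged.
    Anything outside that range is clamped and attributed to the supervisor.
    """
    if 0.0 <= proposed.speed_mps <= max_speed_mps:
        return proposed
    clamped = min(max(proposed.speed_mps, 0.0), max_speed_mps)
    return Command(speed_mps=clamped, source="supervisor")


# The learned module proposes a speed above the limit set for this mission.
final = supervise(learned_driver(terrain_roughness=0.2), max_speed_mps=1.5)
print(final)
```

The point of the sketch is only the structure: the constraint lives in a separate module whose behavior can be checked by reading it, so a change in mission context means changing `max_speed_mps` rather than retraining the learned component.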

Nicholas Roy, who leads the Robust Robotics Group at MIT and describes himself as “somewhat of a rabble-rouser” due to his skepticism of some of the claims made about the power of deep learning, agrees with the ARL roboticists that deep-learning approaches often can’t handle the kinds of challenges that the Army has to be prepared for. “The Army is always entering new environments, and the adversary is always going to be trying to change the environment so that the training process the robots went through simply won’t match what they’re seeing,” Roy says. “So the requirements of a deep network are to a large extent misaligned with the requirements of an Army mission, and that’s a problem.”

Roy, who has worked on abstract reasoning for ground robots as part of the RCTA, emphasizes that deep learning is a useful technology when applied to problems with clear functional relationships, but when you start looking at abstract concepts, it’s not clear whether deep learning is a viable approach. “I’m very interested in finding how neural networks and deep learning could be assembled in a way that supports higher-level reasoning,” Roy says. “I think it comes down to the notion of combining multiple low-level neural networks to express higher level concepts, and I do not believe that we understand how to do that yet.” Roy gives the example of using two separate neural networks, one to detect objects that are cars and the other to detect objects that are red. It’s harder to combine those two networks into one larger network that detects red cars than it would be if you were using a symbolic reasoning system based on structured rules with logical relationships. “Lots of people are working on this, but I haven’t seen a real success that drives abstract reasoning of this kind.”
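
The symbolic half of Roy’s comparison can be sketched in a few lines of Python. In a rules-based system, “red car” is just the logical conjunction of two existing predicates. The `DetectedObject` type and the `is_car`, `is_red`, and `both` names below are hypothetical, introduced only for illustration; the sketch says nothing about how two trained networks would be merged, which is the part Roy describes as unsolved.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DetectedObject:
    """Symbolic description of one object in a scene (hypothetical)."""
    label: str
    color: str


Predicate = Callable[[DetectedObject], bool]


def is_car(obj: DetectedObject) -> bool:
    return obj.label == "car"


def is_red(obj: DetectedObject) -> bool:
    return obj.color == "red"


def both(first: Predicate, second: Predicate) -> Predicate:
    """Compose two rules with logical AND; no retraining is involved."""
    return lambda obj: first(obj) and second(obj)


is_red_car = both(is_red, is_car)

scene = [
    DetectedObject("car", "red"),
    DetectedObject("car", "blue"),
    DetectedObject("branch", "red"),
]
red_cars = [obj for obj in scene if is_red_car(obj)]
print(red_cars)
```

Because each rule is an explicit function over named attributes, the composed concept is obtained by composition alone, which is the property Roy contrasts with two separately trained networks.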

For the foreseeable future, ARL is making sure that its autonomous systems are safe and robust by keeping humans around for both higher-level reasoning and occasional low-level advice. Humans might not be directly in the loop at all times, but the idea is that humans and robots are more effective when working together as a team. When the most recent phase of the Robotics Collaborative Technology Alliance program began in 2009, Stump says, “we’d already had many years of being in Iraq and Afghanistan, where robots were often used as tools. We’ve been trying to figure out what we can do to transition robots from tools to acting more as teammates within the squad.”

RoMan gets a little bit of help when a human supervisor points out a region of the branch where grasping might be most effective. The robot doesn’t have any fundamental knowledge about what a tree branch actually is, and this lack of world knowledge (what we think of as common sense) is a fundamental problem with autonomous systems of all kinds. Having a human leverage our vast experience into a small amount of guidance can make RoMan’s job much easier. And indeed, this time RoMan manages to successfully grasp the branch and noisily haul it across the room.

Turning a robot into a good teammate can be difficult, because it can be tricky to find the right amount of autonomy. Too little and it would take most or all of the focus of one human to manage one robot, which may be appropriate in special situations like explosive-ordnance disposal but is otherwise not efficient. Too much autonomy and you’d start to have issues with trust, safety, and explainability.

“I think the level that we’re looking for here is for robots to operate on the level of working dogs,” explains Stump. “They understand exactly what we need them to do in limited circumstances, they have a small amount of flexibility and creativity if they are faced with novel circumstances, but we don’t expect them to do creative problem-solving. And if they need help, they fall back on us.”

RoMan is not likely to find itself out in the field on a mission anytime soon, even as part of a team with humans. It’s very much a research platform. But the software being developed for RoMan and other robots at ARL, called Adaptive Planner Parameter Learning (APPL), will likely be used first in autonomous driving, and later in more complex robotic systems that could include mobile manipulators like RoMan. APPL combines different machine-learning techniques (including inverse reinforcement learning and deep learning) arranged hierarchically underneath classical autonomous navigation systems. That allows high-level goals and constraints to be applied on top of lower-level programming. Humans can use teleoperated demonstrations, corrective interventions, and evaluative feedback to help robots adjust to new environments, while the robots can use unsupervised reinforcement learning to adjust their behavior parameters on the fly. The result is an autonomy system that can enjoy many of the benefits of machine learning, while also providing the kind of safety and explainability that the Army needs. With APPL, a learning-based system like RoMan can operate in predictable ways even under uncertainty, falling back on human tuning or human demonstration if it ends up in an environment that’s too different from what it trained on.

It’s tempting to look at the rapid progress of commercial and industrial autonomous systems (autonomous cars being just one example) and wonder why the Army seems to be somewhat behind the state of the art. But as Stump finds himself having to explain to Army generals, when it comes to autonomous systems, “there are lots of hard problems, but industry’s hard problems are different from the Army’s hard problems.” The Army doesn’t have the luxury of operating its robots in structured environments with lots of data, which is why ARL has put so much effort into APPL, and into maintaining a place for humans. Going forward, humans are likely to remain a key part of the autonomous framework that ARL is developing. “That’s what we’re trying to build with our robotics systems,” Stump says. “That’s our bumper sticker: ‘From tools to teammates.’”

This article appears in the October 2021 print issue as “Deep Learning Goes to Boot Camp.”
