In late 1969, C. Peter McColough, chairman of Xerox Corp., told the New York Society of Security Analysts that Xerox was determined to develop “the architecture of information” to solve the problems that had been created by the “knowledge explosion.” Legend has it that McColough then turned to Jack E. Goldman, senior vice president of research and development, and said, “All right, go start a lab that will find out what I just meant.”

This article was first published as “Inside the PARC: the ‘information architects’.” It appeared in the October 1985 issue of IEEE Spectrum. A PDF version is available on IEEE Xplore. The diagrams and photographs appeared in the original print version.

Goldman tells it differently. In 1969 Xerox had just bought Scientific Data Systems (SDS), a mainframe computer manufacturer. “When Xerox bought SDS,” he recalled, “I walked promptly into the office of Peter McColough and said, ‘Look, now that we’re in this digital computer business, we better damned well have a research laboratory!’ ”

In any case, the result was the Xerox Palo Alto Research Center (PARC) in California, one of the most unusual corporate research organizations of our time. PARC is one of three research centers within Xerox; the other two are in Webster, N.Y., and Toronto, Ont., Canada. It employs approximately 350 researchers, managers, and support staff (by comparison, Bell Laboratories before the AT&T breakup employed roughly 25,000). PARC, now in its fifteenth year, originated or nurtured technologies that led to these developments, among others:

- The Macintosh computer, with its mouse and overlapping windows.

- Colorful weather maps on TV news programs.

- Laser printers.

- Structured VLSI design, now taught in more than 100 universities.

- Networks that link personal computers in offices.

- Semiconductor lasers that read and write optical disks.

- Structured programming languages like Modula-2 and Ada.

In the mid-1970s, close to half of the top 100 computer scientists in the world were working at PARC, and the laboratory boasted similar strength in other fields, including solid-state physics and optics.

Some researchers say PARC was a product of the 1960s and that decade’s philosophy of power to the people, of improving the quality of life. When the center opened in 1970, it was unlike other major industrial research laboratories; its work wasn’t tied, even loosely, to its corporate parent’s current product lines. And unlike university research laboratories, PARC had one unifying vision: it would develop “the architecture of information.”

The originator of that phrase is unclear. McColough has credited his speech writer. The speech writer later said that neither he nor McColough had a specific definition of the phrase.

So almost everyone who joined PARC in its formative years had a different idea of what the center’s charter was. This had its advantages. Since projects were not assigned from above, the researchers formed their own groups; support for a project depended on how many people its instigator could get to work on it.

“The phrase was ‘Tom Sawyering,’ ” recalled James G. Mitchell, who joined PARC from the defunct Berkeley Computer Corp. in 1971 and is now vice president of research at the Acorn Research Centre in Palo Alto. “Someone would decide that a certain thing was really important to do. They would start working on it, give some structure to it, and then try to convince other people to come whitewash this fence with them.”

First Steps

When Goldman set up PARC, one of his first decisions was to ask George E. Pake, a longtime friend, to run it. Pake was executive vice chancellor, provost, and professor of physics at Washington University in St. Louis, Mo. One of the first decisions Pake in turn made was to hire, among others, Robert Taylor, then at the University of Utah, to help him recruit engineers and scientists for the Computer Science and Systems Science Laboratories.

Taylor had been director of the information-processing techniques office at ARPA (the U.S. military’s Advanced Research Projects Agency), where he and others had funded the heyday of computer research in the mid- and late 1960s.

PARC started with a small nucleus—perhaps fewer than 20 people. Nine came from the Berkeley Computer Corp., a small mainframe computer company that Taylor had tried to convince Xerox to buy as a way of starting up PARC. (Many of the people at BCC were responsible for the design of the SDS 940, the computer on the strength of which Xerox bought Scientific Data Systems in 1968.)

The 20 PARC employees were housed in a small, rented building, “with rented chairs, rented desks, a telephone with four buttons on it, and no receptionist,” recalled David Thornburg, who joined PARC’s General Science Laboratory fresh out of graduate school in 1971. The group thought it should have a computer of its own.

“It’s a little hard to do language research and compiler research without having a machine,” said Mitchell. The computer they wanted was a PDP-10 from Digital Equipment Corp. (DEC).

“There was a rivalry in Datamation [magazine] advertisements between Xerox’s SDS and DEC,” recalled Alan Kay, who came to PARC as a researcher from Stanford University’s Artificial Intelligence Laboratory in late 1970. “When we wanted a PDP-10, Xerox envisioned a photographer lining up a shot of DEC boxes going into the PARC labs, so they said, ‘How about a Sigma 7?’ ”

“We decided it would take three years to do a good operating system for a Sigma 7, while we could build an entire PDP-10 in just one year.” The result was MAXC (Multiple Access Xerox Computer), which emulated the PDP-10 but used semiconductor dynamic RAMs instead of core. So much care was lavished on MAXC’s hardware and software that it held the all-time record for continuous availability as a node on the ARPAnet.

MAXC was crucial to a number of developments. The Intel Corp., which had made the 1103 dynamic memory chips used in the MAXC design, reaped one of the first benefits. “Most of the 1103 memory chips you bought from Intel at the time didn’t work,” recalled Kay. So PARC researcher Chuck Thacker built a chip-tester to screen chips for MAXC. A later version of that tester, based on an Alto personal computer, also developed at PARC, ended up being used by Intel itself on its production line.

And MAXC gave PARC experience in building computers that would later stand the center in good stead. “There were three capabilities we needed that we could not get if we bought a PDP-10,” recalled an early PARC lab manager. “We needed to develop a vendor community—local people who would do design layouts, printed-circuit boards, and so forth—and the only way to get that is to drive it with a project. We also needed semiconductor memory, which PDP-10s did not have. And we thought we needed to learn more about microprogrammable machines, although it turned out we didn’t use those features.”

MAXC set a pattern for PARC: building its own hardware. That committed its researchers to visions that must be turned into reality—at least on a small scale.

“One of the blood oaths that was taken by the original founders was that we would never do a system that wasn’t engineered for 100 users,” said Kay. “That meant that if it was a time-sharing system, you had to run 100 people on it; if it was a programming language, 100 people had to program in it without having their hands constantly held. If it was a personal computer, you had to be able to build 100.”

This policy of building working systems is not the only way of doing research; Mitchell recalled that it was a bone of contention at PARC.

“Systems research requires building systems,” he said. “Otherwise you don’t know whether the ideas you have are any good, or how difficult they are to implement. But there are people who think that when you are building things you are not doing research.”

Since MAXC, the center has built prototypes of dozens of hardware and software systems—prototypes that sometimes numbered in the thousands of units.

The first personal computer developed in the United States is commonly thought to be the MITS Altair, which sold as a hobbyist’s kit in 1976. At nearly the same time the Apple I became available, also in kit form.

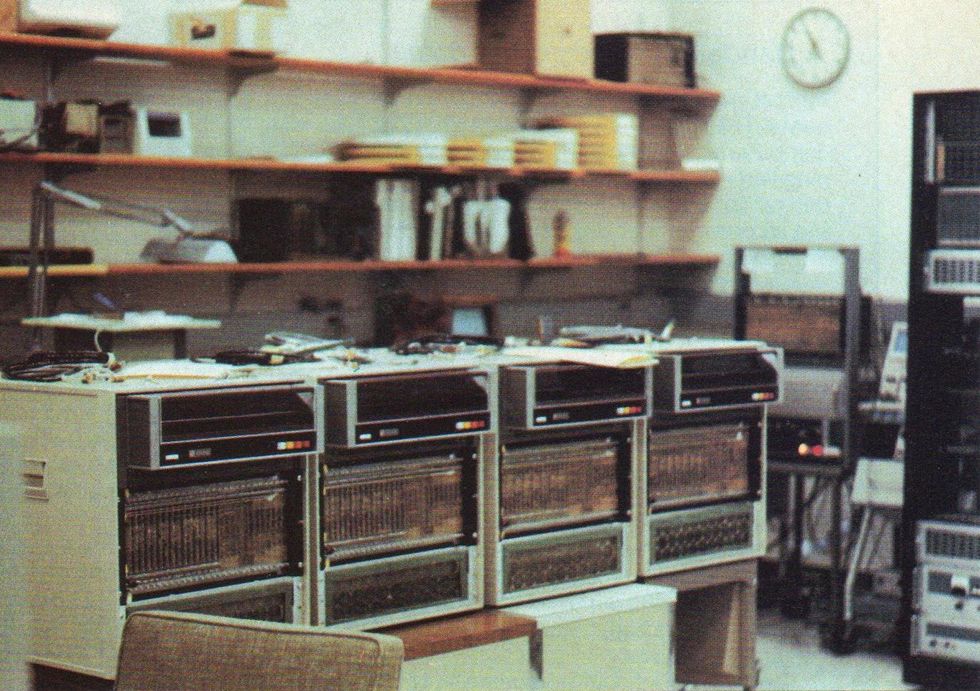

But by the end of that year there were also 200 Alto personal computers in daily use—the first of them having been built in 1973. While researchers in PARC’s Computer Science Laboratory were completing the MAXC and beginning to use it, their counterparts in the Systems Science Laboratory were putting together a distributed computer system using Nova 800 processors and a high-speed character generator.

In September 1972, researchers Butler Lampson and Chuck Thacker of PARC’s Computer Science Laboratory went to Alan Kay in the Systems Science Laboratory and asked, “Do you have any money?”

Kay told them that he had about $250,000 earmarked for more Nova 800s and character-generation hardware.

“How would you like us to build you a computer?” Lampson asked Kay.

“I’d like it a lot,” Kay replied. And on Nov. 22, 1972, Thacker and Ed McCreight began building what was to become the Alto. A Xerox executive reportedly angered Thacker by insisting that it would take 18 months to develop a major hardware system. When Thacker argued that he could do it in three months, a bet was placed.

It took a little longer than three months, but not much. On April 1, 1973, Thornburg recalled, “I walked into the basement where the prototype Alto was sitting, with its umbilical cord attached to a rack full of Novas, and saw Ed McCreight sitting back in a chair with the little words, ‘Alto lives’ in the upper left corner of the display screen.”

Kay said the Alto turned out to be “a vector sum of what Lampson wanted, what Thacker wanted, and what I wanted. Lampson wanted a $500 PDP-10,” he recalled. “Thacker wanted a 10-times-faster Nova 800, and I wanted a machine that you could carry around and children could use.”

The reason the Alto could be built so quickly was its simplicity. The processor, recalled Kay, “was hardly more than a clock”—only 160 chips in 1973’s primitive integrated circuit technology. The architecture goes back to the TX-2, built with 32 program counters at the Massachusetts Institute of Technology’s Lincoln Laboratories in the late 1950s. The Alto, which had 16 program counters, would fetch its next instruction from whichever counter had the highest priority at any given moment. Executing multiple tasks incurred no overhead. While the machine was painting the screen display, the dynamic memory was being refreshed every 2 milliseconds, the keyboard was being monitored, and information was being transferred to and from the disk. The task of lowest priority was running the user’s program.
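The priority scheme described above can be pictured with a short Python sketch. It is only an illustration under stated assumptions, not the Alto's actual microcode: the slot numbers, the `wakeup` flag, and the rule that a larger slot number means higher priority are all hypothetical choices made for the example. What it does show is the idea in the text: every task keeps its own program counter, so switching between tasks costs nothing more than choosing a different counter.

```python
from dataclasses import dataclass

NUM_TASKS = 16  # the article says the Alto had 16 program counters


@dataclass
class Task:
    name: str
    pc: int = 0            # each task keeps its own program counter
    wakeup: bool = False   # set when the device needs service


def pick_task(tasks):
    """Return the index of the highest-priority task requesting service.

    Assumption for this sketch: a larger index means higher priority,
    and task 0 (the user's program) is always ready to run.
    """
    for index in range(len(tasks) - 1, 0, -1):
        if tasks[index] is not None and tasks[index].wakeup:
            return index
    return 0


def step(tasks):
    """Run one instruction from the chosen task and return its name."""
    index = pick_task(tasks)
    task = tasks[index]
    task.pc += 1           # "fetch" from this task's own counter
    task.wakeup = False    # hypothetical: one instruction services the request
    return task.name


def demo():
    """Raise two device requests, then run four cycles."""
    tasks = [None] * NUM_TASKS
    tasks[0] = Task("user program")    # slot numbers are illustrative only
    tasks[4] = Task("keyboard")
    tasks[9] = Task("memory refresh")
    tasks[12] = Task("display")
    tasks[4].wakeup = True
    tasks[12].wakeup = True
    trace = [step(tasks) for _ in range(4)]
    return trace, tasks[0].pc
```

In `demo()`, the display and keyboard requests are served first, in priority order, and the user's program gets only the cycles left over.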

In 1973 every researcher at PARC wanted an Alto personal computer, but there weren’t enough to go around. To speed things up, researchers dropped into the Alto laboratory whenever they had a few free moments to help with computer assembly.

The prototype was a success, and more Altos were built. Research on user interfaces, computer languages, and graphics began in earnest. Lampson, Thacker, and other instigators of the project got the first models. Many PARC researchers pitched in to speed up the production schedules, but there never seemed to be enough Altos.

“There was a lab where the Altos were getting built, with circuit boards lying around, and anyone could go in and work on them,” recalled Daniel H.H. Ingalls, now a principal engineer at Apple Computer Inc., Cupertino, Calif.

Ron Rider, who is still with Xerox, “had an Alto when Altos were impossible to get,” recalled Bert Sutherland, who joined PARC in 1975 as manager of the Systems Science Laboratory. “When I asked him how he got one, he told me that he went around to the various laboratories, collected parts that people owed him, and put it together himself.”

Networking: The Story of Ethernet

By today’s standards the Alto was not a particularly powerful computer. But if several Altos are linked, along with file servers and printers, the result looks suspiciously like the office of the future.

The idea of a local computer network had been discussed before PARC was founded—in 1966, at Stanford University. Larry Tesler, now manager of object-oriented systems at Apple, who had graduated from Stanford, was still hanging around the campus when the university was considering buying an IBM 360 timesharing system.

“One of the guys and I proposed that instead they buy 100 PDP-1s and link them together in a network,” Tesler said. “Some of the advisors thought that was a great idea; a consultant from Yale, Alan Perlis, told them that was what they ought to do, but the IBM-oriented people at Stanford thought it would be safer to buy the timesharing system. They missed the opportunity to invent local networking.” So PARC ended up with another first. At the same time that the Alto was being built, Thacker conceived of the Ethernet, a coaxial cable that would link machines in the simplest possible fashion. It was based in part on the Alohanet, a packet radio network developed at the University of Hawaii in the late 1960s.

“Thacker made the remark that coaxial cable is nothing but captive ether,” said Kay. “So that part of it was already set before Robert Metcalfe and David Boggs came on board—that it would be packet-switching and that it would be a collision-type network. But then Metcalfe and Boggs sweated for a year to figure out how to do the damn thing.” (Metcalfe later founded 3Com Corp., Mountain View, Calif.; Boggs is now with DEC Western Research in Los Altos, Calif. The two of them hold the basic patents on the Ethernet.)

“I’ve always thought the fact that Boggs was a ham radio operator was important,” Sutherland said. “It had a great impact on the way the Ethernet was designed, because the Ethernet fundamentally doesn’t work reliably. It’s like citizens’ band radio, or any of the other kinds of radio communication, which are fundamentally not reliable in the way that we think of the telephone. Because you know it basically doesn’t work, you do all the defensive programming—the ‘say again, you were garbled’ protocols that were worked out for radio communication. And that makes the resulting network function extremely reliably.”

“Boggs was a ham and knew that you could communicate reliably through an unreliable medium. I’ve often wondered what would have happened if he hadn’t had that background,” Sutherland added.

Once the Ethernet was built, using it was fairly simple: a computer that wanted to send a message would wait and see whether the cable was clear. If it was, the machine would send the information in a packet prefaced with the address of its recipient. If two messages collided, the machines that sent them would each wait for a random interval before trying again.
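The send, collide, and retry cycle described above can be sketched in a few lines of Python. This is a toy model under loud assumptions, not the protocol Metcalfe and Boggs built: time is divided into slots, every packet fits in one slot (so "waiting for a clear cable" reduces to checking who else speaks in the same slot), and the wait after a collision is drawn uniformly from a fixed range. The function name `send_all` and its parameters are invented for the example.

```python
import random


def send_all(stations, max_wait=8, seed=0, max_slots=10_000):
    """Simulate stations sharing one cable in discrete time slots.

    stations: dict mapping sender address -> (recipient address, payload).
    Returns the packets in the order they got through, as
    (recipient, sender, payload) tuples.
    Assumptions for this sketch: time is slotted, every packet fits in
    one slot, and the wait after a collision is drawn uniformly from
    1..max_wait slots.
    """
    rng = random.Random(seed)
    next_try = {sender: 0 for sender in stations}  # slot of next attempt
    delivered = []
    for slot in range(max_slots):
        if not next_try:
            break
        ready = [s for s, t in next_try.items() if t <= slot]
        if len(ready) == 1:
            # Only one machine spoke in this slot: the packet gets through,
            # prefaced with the address of its recipient.
            sender = ready[0]
            recipient, payload = stations[sender]
            delivered.append((recipient, sender, payload))
            del next_try[sender]
        elif len(ready) > 1:
            # Collision: every sender involved waits a random interval.
            for sender in ready:
                next_try[sender] = slot + rng.randint(1, max_wait)
    return delivered
```

Calling `send_all` with three stations that all want to transmit at slot 0 produces an immediate collision. The random waits then spread the retries out until each packet has the cable to itself.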

One innovative use for the network had nothing to do with people sending messages to one another; it involved communication solely between machines. Because the dynamic memory chips were so unreliable in those days, the Alto also ran a memory check when it wasn’t doing anything else. Its response to finding a bad chip was remarkable: “It would send a message telling which Alto was bad, which slot had the bad board, and which row and column had the bad chips,” Thornburg said. “The reason I found out about this was that one day the repairman showed up and said, ‘Any time you’re ready to power down, I need to fix your Alto,’ and I didn’t even know anything was wrong.”

EARS: The Story of the First Laser Printer

While the Ethernet was being developed, so was another crucial element in the office of the future: the laser printer. After all, what use was a screen that could show documents in multiple type styles and a network that could transmit them from place to place without some means of printing them efficiently?

The idea for the laser printer came to PARC from Xerox’s Webster, N.Y., research laboratory—along with its proponent, Gary Starkweather. He had the idea of using a laser to paint information, in digital form, onto the drum or belt of a copying machine, then-research vice president Goldman recalled. Starkweather reported to the vice president of the Business Products Group for Advanced Development, George White.

“George White came to me,” said Goldman, “and said, ‘Look, Jack, I got a terrific guy named Gary Starkweather doing some exciting things on translating visual information to print by a laser, using a Xerox machine, of course. What an ideal concept that would be for Xerox. But I don’t think he’s going to thrive in Rochester; nobody’s going to listen to him, they’re not going to do anything that far advanced. Why don’t you take him out to your new lab in Palo Alto?’ ”

Newly appointed PARC manager Pake jumped at the opportunity. Starkweather and a few other researchers from Rochester were transferred to Palo Alto and started PARC’s Optical Science Laboratory. The first laser printer, EARS (Ethernet-Alto-Research character generator-Scanning laser output terminal), built by Starkweather and Ron Rider, began printing documents that were generated by Altos and sent to it via Ethernet in 1973.

EARS wasn’t perfect, Thornburg said. It had a dynamic character generator that would create new patterns for characters and graphics as they came in. If a page had no uppercase Qs in it, the character generator would economize on internal memory by not generating a pattern for a capital “Q.” But if a page contained a very complex picture, the character generator would run out of space for patterns; “there were certain levels of complexity in drawings that couldn’t be printed,” Thornburg recalled.
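The trade-off Thornburg describes, generating patterns only on demand and failing when a page needs too many of them, can be illustrated with a small Python sketch. It is a loose model and not the EARS design: the `capacity` limit, the exception name, and the string stand-ins for raster data are all hypothetical.

```python
class PatternMemoryFull(Exception):
    """Raised when a page needs more patterns than the memory can hold."""


def build_patterns(page_items, capacity):
    """Create one pattern per distinct item on the page, on demand.

    page_items: iterable of hashable items (characters or picture pieces).
    capacity: hypothetical limit on the number of stored patterns.
    """
    patterns = {}
    for item in page_items:
        if item in patterns:
            continue  # already generated for this page; reuse it
        if len(patterns) >= capacity:
            raise PatternMemoryFull(f"no room for a pattern for {item!r}")
        patterns[item] = f"<bitmap for {item!r}>"  # stand-in for raster data
    return patterns
```

A page of ordinary text reuses a small set of patterns and never builds one for a character it does not contain. A page whose picture breaks down into more distinct pieces than `capacity` allows raises `PatternMemoryFull`, the sketch's analogue of a drawing too complex to print.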

Even with these drawbacks, the laser printer was still an enormous advance over the line printers, teletypes, and facsimile printers that were available at the time, and Goldman pushed to have it commercialized as quickly as possible. But Xerox resisted. In fact, a sore point throughout PARC’s history has been the parent organization’s seeming inability to exploit the developments that researchers made.

In 1972, when Starkweather built his first prototype, the Lawrence Livermore National Laboratory, in an effort to spur the technology, put out a request for bids for five laser printers. But Goldman was unable to convince the executive to whom Xerox’s Electro-Optical Systems division reported (whose background was accounting and finance) to allow a bid. The reason: Xerox might have lost $150,000 over the life of the contract if the laser printers needed repair as often as the copiers on which they were based, even though initial evidence showed that printing caused far less wear and tear than copying.

In 1974 the laser printer first became available outside PARC when a small group of PARC researchers under John Ellenby—who built the Alto II, a production-line version of the Alto, and who is now vice president of development at Grid Systems Corp., Mountain View, Calif.—began buying used copiers from Xerox’s copier division and installing laser heads in them. The resulting printers, known as Dovers, were distributed within Xerox and to universities. Sutherland estimated that several dozen were built.

“They stripped out all the optics and turned them back to the copier division for credit,” he recalled. Even today, he said, he receives laser-printed documents from universities in which he can recognize the Dover typefaces.

Also in 1974, the Product Review Committee at Xerox headquarters in Rochester, N.Y., was finally coming to a decision about what kind of computer printer the company should manufacture. “A bunch of horse’s asses who don’t know anything about technology were making the decision, and it looked to me, sitting a week before the election, that it was going toward CRT technology,” said Goldman. (Another group at Xerox had developed a printing system whereby text displayed on a special cathode ray tube would be focused on a copier drum and printed.) “It was Monday night. I commandeered a plane,” Goldman recalled. “I took the planning vice president and the marketing vice president by the ear, and I said, ‘You two guys are coming with me. Clear your Tuesday calendars. You are coming with me to PARC tonight. We’ll be back for the 8:30 meeting on Wednesday morning.’ We left around 7:00 p.m., got to California at 1:00, which is only 10:00 their time, and the guys at PARC, bless their souls, did a beautiful presentation showing what the laser printer could do.”

“If you’re dealing with marketing or planning people, make them kick the tires. All the charts and all the slides aren’t worth a damn,” Goldman said.

The committee opted to go with laser technology, but there were delays. “They wouldn’t let us get them out on 7000s,” Goldman said, referring to the old-model printer that Ellenby’s group had used as a base. “Instead they insisted on going with new 9000 Series, which didn’t come out until 1977.”

From a purely economic standpoint, Xerox’s investment in PARC for its first decade was returned with interest by the profits from the laser printer. This is perhaps ironic, since one vision of the office of the future was that it would be paperless.

“I think PARC has generated more paper than any other office by far, because at the press of a button you can print 30 copies of any report,” observed Douglas Fairbairn, a former PARC technician and now vice president for user-designed technology at VLSI Technology Inc. “If the report is 30 pages long, that’s 1000 pages, but it still takes only a few minutes. Then you say, ‘I guess I wanted that picture on the other page.’ That’s another 1000 pages.”

Fun and Games With Email and Printers

By the mid-1970s the Altos in the offices of most PARC researchers had been customized to their tastes. Richard Shoup’s Alto had a color display. Taylor’s Alto had a speaker—which played “The Eyes of Texas Are Upon You” whenever he received an electronic mail message.

And, as many people have found in the 10 years since the Alto became widespread at PARC, personal computers can be used for enjoyment as well as work. The PARC researchers were among the first to discover this.

“At night, whenever I was in Palo Alto,” Goldman said, “I’d go over to the laboratory and watch Alan Kay invent a game. This was long before electronic games, and these kids were inventing these things all the time until midnight, 1:00 a.m.”

“I enjoyed observing a number of firsts,” Sutherland said. “Xerox had the first electronic raffle nationwide. At Xerox, I received my first electronic junk mailing, first electronic job acceptance, and first electronic obituary.”

When the Xerox 914 copiers came out in the early 1960s, “I was a copy freak,” said Lynn Conway, who joined PARC from Memorex Corp. in 1973 and is now associate dean and professor of electrical engineering and computer science at the University of Michigan in Ann Arbor. “I liked to make things and give them out, like maps—all kinds of things. And in the Xerox environment in ’76, all of a sudden you could create things and make lots of them.”

Dozens of clubs and interest groups were started that met on the network. Whatever a PARC employee’s hobby or interest, he or she could find someone with whom to share that interest electronically. Much serious work got done electronically as well: reports, articles, sometimes entire design projects were done through the network.

One side effect of all this electronic communication was a disregard for appearances and other external trappings of status.

“People at PARC have a tendency to have very strong personalities, and sometimes in design sessions those personalities came over a little more strongly than the technical content,” said John Warnock, who joined PARC in 1978 from the Evans & Sutherland Corp., where he worked on high-speed graphics systems. Working via electronic mail eliminated the personality problems during design sessions. Electronic interaction was particularly useful for software researchers, who could send code back and forth.

Warnock, who is now president of Adobe Systems Inc., Palo Alto, Calif., described the design of Interpress, a printing protocol: “One of the designers was in Pittsburgh, one of them was in Philadelphia, there were three of us in this area, and a couple in El Segundo [Calif.]. The design was done almost completely over the mail system, remotely; there were only two occasions when we all got together in the same room.”

Electronic mail was also invaluable for keeping track of group projects.

“One of the abilities that is really useful is to save a sequence of messages on a particular subject so that you can refer to it,” said Warren Teitelman, who joined PARC in 1972 from BBN Inc. and is currently manager of programming environments at Sun Microsystems in Mountain View. “Or if somebody comes into a discussion late and they don’t have the context, you can bring them up to date by sending them all the messages,” Teitelman added.

But electronic mail sometimes got out of hand at PARC. Once, after Teitelman had been out of touch for a week, he logged onto the system and found 600 messages in his mailbox.

Superpainting: The Story of Computer Paint Systems

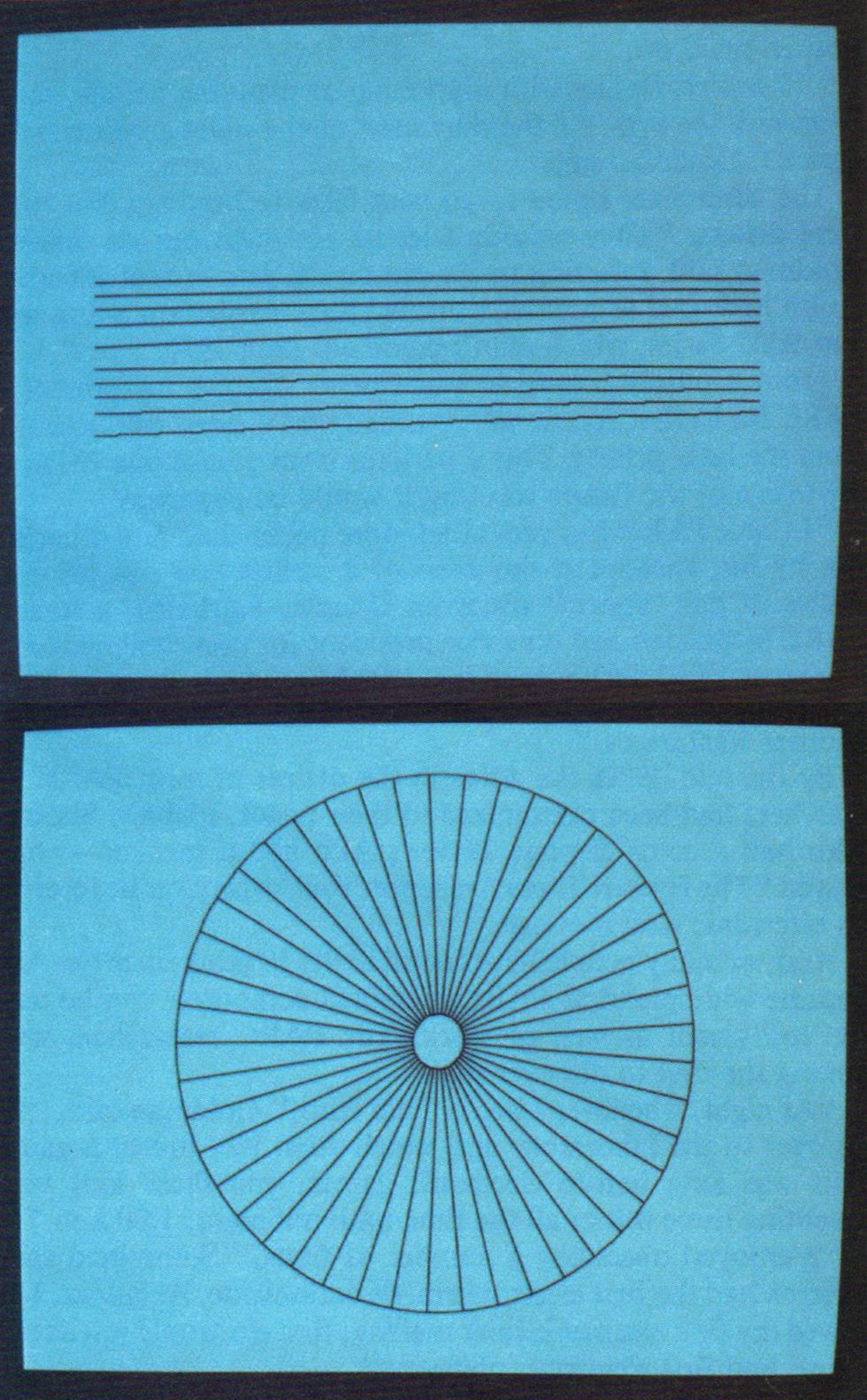

Antialiasing—removing jagged edges from diagonal lines and curves—is a standard technique in computer graphics today. These pictures, produced by Superpaint at PARC in 1972, were among the first demonstrations of antialiasing.

As anyone who has sat through a business meeting knows, the office of today includes graphics as well as text. In 1970, Shoup, who is now chairman of Aurora Systems Inc., started working at PARC on new ways to create and manipulate images digitally in the office of the future. His research started the field of television graphics and won Emmy awards for both him and Xerox.

“It quickly became clear that if we wanted to do a raster scan system, we ought to do it compatible with television standards so that we could easily obtain monitors and cameras and videotape recorders,” Shoup recalled. In early 1972 he built some simple hardware to generate antialiased lines, and by early 1973 the system, called Superpaint, was completed.

It was the first complete paint system with an 8-bit frame buffer anywhere, recalled Alvy Ray Smith, who worked with Superpaint at PARC and is soon to be vice president and chief technical officer of Pixar Inc., San Rafael, Calif.; it was also the first system to use several graphics aids: color lookup tables for simple animation, a digitizing tablet for input, a palette for mixing colors directly on the screen. The system also had a real-time video scanner so images of real objects could be digitized and then manipulated.
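Color lookup tables make simple animation cheap because the picture itself never changes. A minimal Python sketch of the idea follows; it is a generic illustration and not Superpaint's implementation, and the function names `render` and `cycle` are invented here. The frame buffer holds small index values (8 bits per pixel would allow 256 table entries), the table maps each index to a displayed color, and rotating a band of table entries makes colors appear to flow across the image.

```python
def render(frame_buffer, lookup_table):
    """Turn stored pixel indices into RGB colors via the lookup table."""
    return [[lookup_table[index] for index in row] for row in frame_buffer]


def cycle(lookup_table, first, last):
    """Return a new table with entries first..last rotated by one place."""
    band = lookup_table[first:last + 1]
    rotated = band[-1:] + band[:-1]
    return lookup_table[:first] + rotated + lookup_table[last + 1:]


# An 8-bit frame buffer indexes a 256-entry table; start with gray levels.
RED, GREEN, BLUE = (255, 0, 0), (0, 255, 0), (0, 0, 255)
table = [(i, i, i) for i in range(256)]
table[1], table[2], table[3] = RED, GREEN, BLUE

frame = [[1, 2, 3],
         [0, 1, 2]]          # pixel data: indices, not colors

before = render(frame, table)
after = render(frame, cycle(table, 1, 3))
```

Here `frame` is identical in both renders. Only three table entries moved, yet every pixel that uses them changes color at once, which is the essence of the color cycling effects mentioned in the article.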

“The very first thing I did on the system was some antialiased lines and circles,” Shoup said, “because I’d written a paper on that subject and hadn’t finished the examples. But when I submitted the paper and had it accepted, the machine that was going to be used to do the examples wasn’t built yet.”

By mid-1974, Superpaint had been augmented by additional software that allowed it to perform all kinds of tricks, and Smith, who had just completed doctoral work in a branch of mathematics known as cellular automata theory, was hired to help put the machine through its paces. He used Superpaint to make a videotape called “Vidbits” that was later shown at the Museum of Modern Art in New York City. Six months later his initial contract with PARC expired and was not renewed. While disappointed, Smith was not surprised, as he had found that not everyone there shared his enthusiasm for painting with a computer.

“The color graphics lab was a long narrow room with seven doors into it,” he recalled. “You had to go through it to get to a lot of other places. Most people, when they walked through, would look at the screen and stop—even the most trite stuff had never been seen before. Cycling color maps had never been seen before. But there were some people who would go through and wouldn’t stop. I couldn’t figure out how people could walk through that room and never stop and look.”

A reason aside from others’ indifference to video graphics may have contributed to Smith’s departure. One of the first times Superpaint was seen by a wide audience was in a public television show, “Supervisions,” produced by station KCET in Los Angeles. “It was just used a couple of times for little color cycling effects,” Shoup recalled. But Xerox was not amused by the unauthorized use of the system in a program.

“Bob Taylor sat with Alvy [Smith] one entire afternoon while Alvy pushed the erase button on the videotape recorder, eliminating the Xerox logo from every copy of that tape,” Shoup continued. (This was one of the tapes viewed by the committee that awarded Xerox its Emmy.)

Shoup stayed at PARC, supported by Kay’s research group, while Smith moved on, armed with a National Education Association grant to do computer art. He found support for his work at the New York Institute of Technology, where he helped develop Paint, which became the basis of Ampex Video Art (AVA), and N.Y. Tech’s Images, two graphics systems still in use today.

While Shoup was alone in pursuing Superpaint at PARC, Smith wasn’t the only Superpaint addict wandering the country in search of a frame buffer. David Miller, now known as David Em, and David Difrancesco were the first artists to paint with pixels. When Em lost access to Superpaint, he set out on a year-long quest for a frame buffer that finally brought him to the Jet Propulsion Laboratory in Pasadena, Calif.

Finally, in 1979, Shoup left PARC to start his own company to manufacture and market a paint system, the Aurora 100. He acknowledges that he made no technological leaps in designing the Aurora, which is simply a commercialized second-generation version of his first-generation system at PARC.

“The machine we’re building at Aurora for our next generation is directly related to things we were thinking about seven or eight years ago at PARC,” Shoup said.

The Aurora 100 is now used by corporations to develop in-house training films and presentation graphics. Today, tens of thousands of artists are painting with pixels. The 1985 Siggraph art show in San Francisco alone received 4000 entries.

Of Mice and Modes: The Story of the Graphical User Interface

Most people who know that a mouse is a computer peripheral think it was invented by Apple. The cognoscenti will correct them by saying that it was developed at Xerox PARC.

But the mouse in fact preceded PARC. “I saw a demonstration of a mouse being used as a pointing device in 1966,” Tesler recalled. “Doug Engelbart [of SRI International Inc. in Menlo Park, Calif.] invented it.”

At PARC, Tesler set out to prove that the mouse was a bad idea. “I really didn’t believe in it,” he said. “I thought cursor keys were much better.

“We literally took people off the streets who had never seen a computer. In three or four minutes they were happily editing away, using the cursor keys. At that point I was going to show them the mouse and prove they could select text faster than with the cursor keys. Then I was going to show that they didn’t like it.

“It backfired. I would have them spend an hour working with the cursor keys, which got them really used to the keys. Then I would teach them about the mouse. They would say, ‘That’s interesting but I don’t think I need it.’ Then they would play with it a bit, and after two minutes they never touched the cursor keys again.”

“While I didn’t mind using a mouse for text manipulation, I thought it was totally inappropriate for drawing. People stopped drawing with rocks in Paleolithic times.”

—David Thornburg

After Tesler’s experiment, most PARC researchers accepted the mouse as a proper peripheral for the Alto. One holdout was Thornburg.

“I didn’t like the mouse,” he said. “It was the least reliable component of the Alto. I remember going into the repair room at PARC, where there was a shoebox to hold good mice and a 50-gallon drum for bad mice. And it was expensive, too expensive for the mass market.

“While I didn’t mind using a mouse for text manipulation, I thought it was totally inappropriate for drawing. People stopped drawing with rocks in Paleolithic times, and there’s a reason for that: rocks aren’t appropriate drawing implements; people moved on to sticks.”

Thornburg, a metallurgist who had been doing materials research at PARC, began work on alternative pointing devices. He came up with a touch tablet in 1977 and attached it to an Alto. Most people who looked at it said, “That’s nice, but it’s not a mouse,” Thornburg recalls. His touch tablet did eventually find its way into a product: the Koalapad, a home-computer peripheral costing less than $100.

“It was clear that Xerox didn’t want to do anything with it,” Thornburg said. “They didn’t even file for patent protection, so I told them that I’d like to have it. After a lot of horsing around, they said OK.”

Thornburg left Xerox in 1981, worked at Atari for a while, then started a company—now Koala Technologies Inc.—with another ex-PARC employee to manufacture and market the Koalapad.

Meanwhile, though Tesler accepted the need for a mouse as a pointing device, he wasn’t satisfied with the way SRI’s mouse worked. “You had a five-key keyset for your left hand and a mouse with three buttons for your right hand. You would hit one or two keys with the left hand, then point at something with the mouse with the right hand, and then you had more buttons on the mouse for confirming your commands. It took six to eight keystrokes to do a command, but you could have both hands going at once. Experts could go very fast.”

The SRI system was heavily moded. In a system with modes, the user first indicates what he wants to do—delete, for example. This puts the system in the delete mode. The computer then waits for the user to indicate what he wants deleted. If the user changes his mind and tries to do something else, he can’t unless he first cancels the delete command.

In a modeless system, the user first points to the part of the display he wants to change, then indicates what should be done to it. He can point at things all day, constantly changing his mind, and never have to follow up with a command.

To make things even more complicated for the average user (but more efficient for programmers), the meaning of each key varied, depending on the mode the system was in. For example, “J” meant scroll and “I” meant insert. If the user tried to “insert,” then to “scroll” without canceling the first command, he would end up inserting the letter “J” in the text.
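The difference between the two styles can be made concrete with a toy model. The sketch below is an illustration only, not a reconstruction of the SRI software: the class names, the `ESC` cancel key, and the method names are assumptions introduced here.

```python
class ModedEditor:
    """Toy moded editor: what a key does depends on the current mode."""

    def __init__(self):
        self.mode = "command"   # either "command" or "insert"
        self.text = ""
        self.scrolled = 0

    def press(self, key):
        if self.mode == "insert":
            if key == "ESC":            # hypothetical cancel key
                self.mode = "command"
            else:
                self.text += key        # any other key is typed into the text
        elif key == "I":
            self.mode = "insert"        # "I" means insert
        elif key == "J":
            self.scrolled += 1          # "J" means scroll


class ModelessEditor:
    """Toy modeless editor: select first, then say what to do."""

    def __init__(self, text):
        self.text = text
        self.selection = (0, 0)

    def select(self, start, end):
        self.selection = (start, end)   # pointing commits to nothing

    def delete(self):
        start, end = self.selection
        self.text = self.text[:start] + self.text[end:]
        self.selection = (start, start)


# "Insert", then "scroll" without canceling: the J lands in the text.
editor = ModedEditor()
for key in ["I", "J"]:
    editor.press(key)
print(repr(editor.text), editor.scrolled)   # 'J' 0

# Changing one's mind in the modeless editor is just another selection.
modeless = ModelessEditor("hello world")
modeless.select(0, 5)
modeless.select(5, 11)
modeless.delete()
print(repr(modeless.text))                  # 'hello'
```

In the moded version the state that matters (`mode`) is invisible in the keystroke itself, which is exactly what trips up the user in the example above.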

Larry Tesler set out to test the interface on a nonprogrammer…. Apparently nobody had done that before.

Most programmers at PARC liked the SRI system and began adapting it in their projects. “There was a lot of religion around that this was the perfect user interface,” said Tesler. “Anytime anybody would suggest changing it, they were greeted with glares.”

Being programmers, they had no trouble with the fact that the keypad responded to combinations of keys pressed simultaneously that represented the alphabet in binary notation. Tesler set out to test the interface on a nonprogrammer. He taught a newly hired secretary how to work the machine and observed her learning process. “Apparently nobody had done that before,” he said. “She had a lot of trouble with the mouse and the keyset.”
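To see why programmers found the keyset natural and a newcomer did not, consider what "the alphabet in binary notation" implies. The sketch below assumes that the five keys form a 5-bit number and that the value n selects the nth letter. The bit order and the function name `decode_chord` are assumptions made for illustration, not details taken from the SRI hardware.

```python
import string


def decode_chord(keys):
    """Turn five key states into a letter.

    keys: sequence of five booleans, True if the key is held down.
    Index 0 is treated as the least significant bit (an assumption).
    """
    value = sum(1 << i for i, down in enumerate(keys) if down)
    if not 1 <= value <= 26:
        raise ValueError(f"chord value {value} does not map to a letter")
    return string.ascii_lowercase[value - 1]


print(decode_chord((True, False, False, False, False)))   # a
print(decode_chord((True, True, False, False, False)))    # c
print(decode_chord((False, True, False, True, True)))     # z
```

A user has to hold the binary code for every letter in their head, which is trivial for someone who thinks in bits and a real obstacle for someone who does not.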

Tesler argued for a simpler user interface. “Just about the only person who agreed with me was Alan Kay,” he said. Kay supported Tesler’s attempt to write a modeless text editor on the Alto.

Although most popular computers today use modeless software, with the Macintosh being probably the best example, Tesler’s experiments didn’t settle the issue.

“MacWrite, Microsoft Word, and the Xerox Star all started out as projects that were heavily moded,” Tesler said, “because programmers couldn’t believe that a user interface could be flexible and useful and extensible unless it had a lot of modes. The proof that this wasn’t so didn’t come by persuasion, it came through customers complaining that they liked a dinky modeless editor with no features better than the one that had all the features they couldn’t figure out how to use.”

Kids and Us: The Story of Smalltalk

The same kinds of simplification that made for the modeless editor were also applied to programming languages and environments at PARC. Seeking a language that children could use, Kay could regularly be seen testing his work with kindergarten and elementary-school pupils.

What Kay aimed for was the Dynabook: a simple, portable personal computer that would cater to a person’s information needs and provide an outlet for creativity: writing, drawing, and music composition. Smalltalk was to be the language of the Dynabook. It was based on the concepts of classes pioneered in the programming language Simula, and on the idea of interacting objects communicating by means of messages requesting actions, rather than by programs performing operations directly on data. The first version of Smalltalk was written as the result of a chance conversation between Kay, Ingalls, and Ted Kaehler, another PARC researcher. Ingalls and Kaehler were thinking about writing a language, and Kay said, “You can do one on just one page.”

He explained, “If you look at a Lisp interpreter written in itself, the kernel of these things is incredibly small. Smalltalk could be even smaller than Lisp.”

The problem with this approach, Kay recalled, is that “Smalltalk is doubly recursive: you’re in the function before you ever do anything with the arguments.” In Smalltalk-72, the first version of the language, control was passed to the object as soon as possible. Thus writing a concise definition of Smalltalk in Smalltalk was very difficult.

“It took about two weeks to write 10 lines of code,” Kay said, “and it was very hard to see whether those 10 lines of code would work.”

Kay spent the two weeks thinking from 4:00 to 8:00 a.m. each day and then discussing his ideas with Ingalls. When Kay was done, Ingalls coded the first Smalltalk in Basic on the Nova 800, because that was the only language available at the time with decent debugging facilities.

“Smalltalk was of a scale that you could go out and have a pitcher of beer or two and come back, and then two people would egg each other on and do an entire system in an afternoon.”

—Alan Kay

Because the language was so small and simple, developing programs and even entire systems was also quite fast. “Smalltalk was of a scale that you could go out and have a pitcher of beer or two and come back, and then two people would egg each other on and do an entire system in an afternoon,” Kay said. From one of those afternoon sessions came overlapping windows.

The concept of windows had originated in Sketchpad, an interactive graphics program developed by Ivan Sutherland at MIT in the early 1960s; the Evans & Sutherland Corp. had implemented multiple windows on a graphics machine in the mid-1960s. But the first multiple overlapping windows were implemented on the Alto by PARC’s Diana Merry in 1973.

“All of us thought that the Alto display was incredibly small,” said Kay, “and it’s clear that you’ve got to have overlapping windows if you don’t have a large display.”

After windows came the concept of Bitblt—block transfers of data from one portion of memory to another, with no restrictions about alignment on word boundaries. Thacker, the main designer of the Alto computer, had implemented a function called CharacterOp to write characters to the Alto’s bit-mapped screen, and Ingalls extended that work to make a general graphic utility. Bitblt made overlapping windows much simpler, and it also made possible all kinds of graphics and animation tricks.
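The core idea of a block transfer can be sketched in a few lines. The version below is a deliberately simplified illustration, not Ingalls's implementation: it stores one pixel per list element, whereas a real Bitblt works on bits packed into machine words and uses shifts and masks to cope with rectangles that do not start on a word boundary. The function signature is an assumption made for this sketch.

```python
def bitblt(src, src_x, src_y, dst, dst_x, dst_y, width, height):
    """Copy a width x height rectangle of pixels from src into dst.

    src and dst are lists of rows; each row is a list of 0/1 pixels.
    The rectangle may start at any pixel position in either bitmap.
    This sketch does not handle overlapping source and destination
    regions within the same bitmap.
    """
    for row in range(height):
        for col in range(width):
            dst[dst_y + row][dst_x + col] = src[src_y + row][src_x + col]


# A 3 x 3 glyph copied onto an 8 x 4 "screen" at an arbitrary position.
glyph = [
    [1, 0, 1],
    [0, 1, 0],
    [1, 0, 1],
]
screen = [[0] * 8 for _ in range(4)]
bitblt(glyph, 0, 0, screen, 3, 1, 3, 3)

for line in screen:
    print("".join("#" if pixel else "." for pixel in line))
# ........
# ...#.#..
# ....#...
# ...#.#..
```

Drawing a character, popping up a menu, and repainting the exposed part of a window when another is moved are all instances of the same operation with different rectangles, which is why one general utility could serve all of them.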

“I gave a demo in early 1975 to all of PARC of the Smalltalk system using Bitblt for menus and overlapping windows and things,” Ingalls recalled. “A bunch of people came to me afterwards, saying ‘How do you do all these things? Can I get the code for Bitblt?’ and within two months those things were being used throughout PARC.”

Flashy and impressive as it was, Smalltalk-72 “was a dead end,” Tesler said. “It was ambiguous. You could read a piece of code and not be able to tell which were the nouns and which were the verbs. You couldn’t make it fast, and it couldn’t be compiled.”

The first compiled version of Smalltalk, written in 1976, marked the end of the emphasis on a language that children could use. The language was now “a mature programming environment,” Ingalls said. “We got interested in exporting it and making it widely available.”

“It’s terrible that Smalltalk-80 can’t be used by children, since that’s who Smalltalk was intended for. It fell back into data-structure-type programming instead of simulation-type programming.”

—Alan Kay

The next major revision of Smalltalk was Smalltalk-80. Kay was no longer on the scene to argue that any language should be simple enough for a child to use. Smalltalk-80, says Tesler, went too far in the opposite direction from the earliest versions of Smalltalk: “It went to such an extreme to make it compilable, uniform, and readable, that it actually became hard to read, and you definitely wouldn’t want to teach it to children.”

Kay, looking at Smalltalk-80, said, “It’s terrible that it can’t be used by children, since that’s who Smalltalk was intended for. It fell back into data-structure-type programming instead of simulation-type programming.”

While Kay’s group was developing a language for children of all ages, a group of artificial-intelligence researchers within PARC were improving Lisp. Lisp was brought to PARC by Warren Teitelman and Daniel G. Bobrow from Bolt, Beranek, and Newman in Cambridge, Mass., where it was being developed as a service to the ARPA community. At PARC, it was renamed Interlisp, a window system called VLISP was added, and a powerful set of programmers’ tools was developed.

In PARC’s Computer Science Laboratory, researchers were developing a powerful language for systems programming. After going through several iterations, the language emerged as Mesa—a modular language, which allowed several programmers to work on a large project at the same time. The key to this is the concept of an interface—what a module in a program does, rather than how it does it. Each programmer knows what the other modules are chartered to do and can call on them to perform their particular functions.

Another dominant feature was Mesa’s strong type-checking, which prevented programmers from using integer variables where they needed real numbers, or real numbers where they needed character strings—and prevented bugs from spreading from one module of a program to another.
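The separation of what a module does from how it does it can be approximated in a modern language. The sketch below uses Python's `typing.Protocol` as a loose analogy to a Mesa interface. It is not Mesa, and the names `Filing`, `InMemoryFiling`, and `save_draft` are hypothetical. Note one important difference: Mesa checked types at compile time, whereas Python type hints are enforced only by an external checker, not by the interpreter.

```python
from typing import Protocol


class Filing(Protocol):
    """The interface: what a filing module promises to do."""

    def store(self, name: str, data: str) -> None: ...

    def fetch(self, name: str) -> str: ...


class InMemoryFiling:
    """One implementation: how it is done is hidden from callers."""

    def __init__(self) -> None:
        self._files = {}

    def store(self, name: str, data: str) -> None:
        self._files[name] = data

    def fetch(self, name: str) -> str:
        return self._files[name]


def save_draft(filing: Filing, title: str, body: str) -> str:
    """Client code written against the interface only."""
    filing.store(title, body)
    return filing.fetch(title)


print(save_draft(InMemoryFiling(), "memo", "Alto III proposal"))
# Alto III proposal
```

A programmer working on `save_draft` needs only the `Filing` declaration, so the filing module can be written, replaced, or debugged by someone else at the same time. A static checker would also reject a call such as `filing.store(title, 42)`, which is the kind of mismatch strong type-checking is meant to stop at a module boundary.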

These concepts have since been widely adopted as the basis of modular programming languages. “A lot of the ideas in Ada [the standard programming language of the U.S. Department of Defense] and Modula-2 came out of the programming language research done at PARC,” said Chuck Geschke, now executive vice president of Adobe Systems Inc. Modula-2, in fact, was written by computer scientist Niklaus Wirth after he spent a sabbatical at PARC.

Nobody’s Perfect: Xerox PARC’s Failures

While PARC may have had more than its share of successes, like any organization it couldn’t escape some failures. The one most frequently cited by former PARC researchers is Polos.

Polos was an alternate approach to distributed computing. While Thacker and McCreight were designing the Alto, another group at PARC was working with a cluster of 12 Data General Novas, attempting to distribute functions among the machines so that one machine would handle editing, one would handle input and output, another would handle filing.

“With Altos,” Sutherland said, “everything each person needed was put in each machine on a small scale. Polos was an attempt to slice the pie in a different way, to split up offices functionally.”

By the time Polos was working, the Alto computers were proliferating throughout PARC, so Polos was shut down. But it had an afterlife: Sutherland distributed the 12 Novas among other Xerox divisions, where they served as the first remote gateways onto PARC’s Alto network, and the Polos displays were used as terminals within PARC until they were junked in 1977.

Another major PARC project that failed was a combination optical character reader and facsimile machine. The idea was to develop a system that could take printed pages of mixed text and graphics, recognize the text as such and transmit the characters in their ASCII code, then send the rest of the material using the less-efficient facsimile coding method.

“It was fabulously complicated and fairly crazy,” said Charles Simonyi, now manager of application development at Microsoft Corp. “On this project they had this incredible piece of hardware that was the equivalent of a 10,000-line Fortran program.” Unfortunately, the equivalent of tens of thousands of lines of Fortran in those days meant tens of thousands of individual integrated circuits.

“While we made substantial progress at the algorithmic and architecture level,” said Conway, who worked on the OCR project, “it became clear that with the circuit technology at that time it wouldn’t be anywhere near an economically viable thing.” The project was dropped in 1975.

Turning Research Into Products (or Not)

Essentially, the PARC researchers worked in an ivory tower for the first five years; while projects were in their infancy, there was little time for much else. But by 1976, with an Alto on every desk and electronic mail a way of life at the center, researchers yearned to see their creations used by friends and neighbors.

At that point, Kay recalled, about 200 Altos were in use at PARC and other Xerox divisions; PARC proposed that Xerox market a mass-production version of the Alto: the Alto III.

“On Aug. 18, 1976, Xerox turned down the Alto III,” Kay said.

So the researchers, rather than turning their project over to a manufacturing division, continued working with the Alto.

“That was the reason for our downfall,” said Kay. “We didn’t get rid of the Altos. Xerox management had been told early on that Altos at PARC were like Kleenex; they would be used up in three years and we would need a new set of things 10 times faster. But when this fateful period came along, there was no capital.

“We had a meeting at Pajaro Dunes [Calif.] called ‘Let’s burn our disk packs.’ We could sense the second derivative of progress going negative for us,” Kay related. “I really should have gone and grenaded everybody’s disks.”

Instead of starting entirely new research thrusts, the PARC employees focused on getting the fruits of their past research projects out the door as products.

Every few years the Xerox Corp. has a meeting of all its managers from divisions around the world to discuss where the company may be going. At the 1977 meeting, held in Boca Raton, Fla., the big event was a demonstration by PARC researchers of the systems they had built.

The PARC workers assigned to the Boca Raton presentation put their hearts, souls, and many Xerox dollars into the effort. Sets were designed and built, rehearsals were held on a Hollywood sound stage, and Altos and Dovers were shipped between Hollywood and Palo Alto with abandon. It took an entire day to set up the exhibit in an auditorium in Boca Raton, and a special air-conditioning truck had to be rented from the local airport to keep the machines cool. But for much of the Xerox corporate staff, this was the first encounter with the “eggheads” from PARC.

“PARC was a very strange place to the rest of the company… It was thought of as weird computer people who had beards, who didn’t bathe or wear shoes, who spent long hours deep into the night staring at their terminals…and who basically were antisocial eggheads. Frankly, some of us fed that impression.”

—Richard Shoup

“PARC was a very strange place to the rest of the company,” Shoup said. “It was not only California, but it was nerds. It was thought of as weird computer people who had beards, who didn’t bathe or wear shoes, who spent long hours deep into the night staring at their terminals, who had no relationships with any other human beings, and who basically were antisocial eggheads. Frankly, some of us fed that impression, as if we were above the rest of the company.”

There was some difficulty in getting the rest of Xerox to take PARC researchers and their work seriously.

“The presentation went over very well, and the battle was won, but the patient died,” Goldman said. Not only had Xerox executives seen the Alto, the Ethernet, and the laser printer, they had even been shown a Japanese-language word processor. “But the company couldn’t bring them to market!” Goldman said. (By 1983, the company did market a Japanese version of its Star computer.)

One reason that Xerox had such trouble bringing PARC’s advances to market was that, until 1976, there was no development organization to take research prototypes from PARC and turn them into products. “At the beginning, the way in which the technology would be transferred was not explicit,” Teitelman said. “We took something of a detached view and assumed that someone was going to pick it up. It wasn’t until later on that this issue got really focused.”

Reaching Anew: The Story of the First Portable Computer

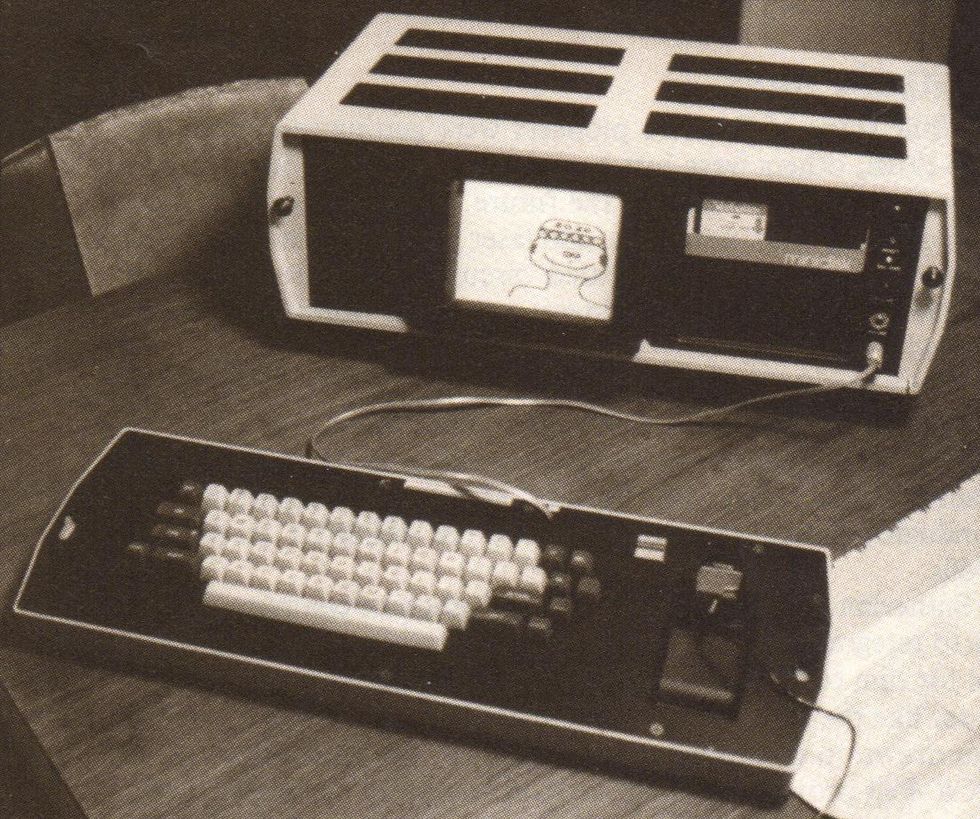

The Notetaker, a portable personal computer built at PARC in 1978, is rumored to have been the inspiration for the Osborne I.

Even with a development organization, it was an uphill battle to get Xerox executives to accept a product. One example was the Notetaker computer, conceived by Adele Goldberg, a researcher in the Smalltalk group who is currently president of the Association for Computing Machinery and who is still at PARC. “Poor Adele,” Tesler said. “The rest of us got involved and kept redefining the project.”

The Notetaker ended up as an 8086-based computer that could fit under an airplane seat. It was battery-powered, ran Smalltalk, and had a touch-sensitive screen designed by Thornburg. “We had a custom monitor, we had error-corrected memory, a lot of custom engineering that we would normally only do for a real product,” said Fairbairn, the Notetaker’s chief hardware designer.

“The last year before I left PARC,” Tesler said, “I spent flying around the country talking to Xerox executives, carrying Notetaker with me. It was the first portable computer run in an airport. Xerox executives made all sorts of promises: we’ll buy 20,000, just talk to this executive in Virginia, then talk to this executive in Connecticut. The company was so spread out, they never got the meeting together. After a year I was ready to give up.”

While Xerox may not have been ready to run with a portable computer, others were. The Osborne I was introduced in 1981, about nine months after Adam Osborne reportedly toured PARC, where pictures of the Notetaker were prominently displayed.

Using the Tools: The Story of Mead-Conway VLSI Design

While some of PARC’s pioneers were getting restless by the mid-1970s, others were just beginning to find uses for the marvelous tools of the office of the future. One was Lynn Conway, who used the Alto, networks, and laser printers to develop a new method of designing integrated circuits and disseminate the method to hundreds of engineers at several dozen institutions around the country.

When Bert Sutherland came in as manager of the Systems Science Laboratory in 1975, he brought Carver Mead, a professor at the California Institute of Technology in Pasadena, to PARC “to wander in and create some havoc.” Mead was an expert in semiconductor design who had invented the MESFET in the late 1960s.

Sutherland had worked on the application of computer graphics to integrated-circuit layout, Conway recalled, so it was natural for him to think about applying an advanced personal computer like the Alto to the problem of IC design. Conway herself was drawn to integrated-circuit design by the frustration of the OCR-Fax project, in which she had conceived an elegant architecture that could only be realized as racks and racks of equipment. But those racks might become a few chips if only they could be designed by someone who knew what they should do and how they should fit together.

“Carver Mead came up and gave a one-week course at PARC on integrated-circuit design,” Fairbairn recalled. “Lynn Conway and I were the ones that really got excited about it and really wanted to do something.”

“Then a whole bunch of things really clicked,” said Conway. “While Carver and I were cross-educating each other on what was going on in computing and in devices, he was able to explain some of the basic MOS design methods that had been evolving within Intel. And we began to see ways to generalize the structures that [those designers] had generated.” Instead of working only on computer tools for design, Conway explained, she and Mead worked to make the design methods simpler and to build tools for the refined methods.

“Between mid-’75 and mid-’77, things went from a fragmentary little thing—one of a number of projects Bert wanted to get going—to the point where we had it all in hand, with examples, and it was time to write.”

In a little less than two years, Mead and Conway had developed the concepts of scalable design rules, repetitive structures, and the rest of what is now known as structured VLSI design—to the point where they could teach it in a single semester.

Today structured VLSI design is taught at more than 100 universities, and thousands of different chips have been built with it. But in the summer of 1977, the Mead-Conway technique was untested—in fact belittled. How could they get it accepted?

“The amazing thing about the PARC environment in 1976-77 was the feeling of power; all of a sudden you could create things and make lots of them. Not just one sheet, but whole books,” said Conway.

And that is exactly what she and her cohorts did. “We just self-published the thing [Introduction to VLSI Systems],” said Conway, “and put it in a form that if you didn’t look twice, you might think this was a completely sound, proven thing.”

It looked like a book, and Addison-Wesley agreed to publish it as a book. Conway insisted it couldn’t have happened without the Altos. “Knowledge would have gotten out in bits and pieces, always muddied and clouded; we couldn’t have generated such a pure form and generated it so quickly.”

The one tool Conway used most in the final stages of the VLSI project was networks: not only the Ethernet within PARC, but the ARPAnet that connected PARC to dozens of research sites across the country. “The one thing I am clear of in retrospect,” said Conway, “is the sense of having powerful invisible weapons that people couldn’t understand we had. The environment at PARC gave us the power to outfox and outmaneuver people who would think we were crazy or try to stop us; otherwise we would never have had the nerve to go out with it the way we did.”

Fire-Breathing Dragon: The Story of the Dorado Computer

In 1979, three years after Alan Kay had wanted to throw away the Altos “like Kleenex,” the Dorado, a machine 10 times more powerful, finally saw the light of day.

“It was supposed to be built by one of the development organizations because they were going to use it in some of their products,” recalled Severo Ornstein, one of the designers of the Dorado and now chairman of Computer Professionals for Social Responsibility in Palo Alto. “But they decided not to do that, so if our lab was going to have it, we were going to have to build it ourselves. We went through a long agonizing period in which none of us who were going to have to do the work really wanted to do it.”

“Taylor was running the lab by that time,” Ornstein said. “The whole thing was handled extremely dexterously. He never twisted anyone’s arm really directly; he presided over it and kept order in the process, but he really allowed the lab to figure out that that was what it had to do. It was really a good thing, too, because it was very hard to bring the Dorado to life. A lot of blood was shed.”

At first, Ornstein recalled, the designers made a false start by using a new circuit-board technology—so-called multiwire technology, in which individual wires are bonded to a board to make connections. But the Dorado boards were too complex for multiwire technology. When the first Dorado ran, there was a question in many people’s minds whether there would ever be a second.

“There Butler Lampson’s faith was important,” Ornstein said. “He was the only one who believed that it could be produced in quantity.”

In fact, even after the Dorado was redesigned using printed-circuit boards instead of multiwire and Dorados began to be built in quantity, they were still rare. “We never had enough budget to populate the whole community with Dorados,” recalled one former PARC manager. “They dribbled out each year, so that in 1984 still not everybody had a Dorado.”

Those who did were envied. “I had a Dorado of my very own,” said John Warnock. “Chuck Geschke was a manager; he didn’t get one.”

“In the early days…I got to take my Alto home. But the evolution of machines at Xerox went in the opposite direction from making it easy to take the stuff home.”

—Dan Ingalls

“I got a crusty old Alto and a sheet of paper,” Geschke said.

The advent of the Dorado allowed researchers whose projects were too big for the Alto to make use of bit-mapped displays and all the other advantages of personal computers. “We had tried to put Lisp on the Alto, and it was a disaster,” recalled Teitelman. “When we got the Dorado, we spent eight or nine months discussing what we would want to see in a programming environment that would combine the best of Mesa, Lisp, and Smalltalk.” The result was Cedar, now commonly acknowledged to be one of the best programming environments anywhere.

“Cedar put some of the good features of Lisp into Mesa, like garbage collection and run-time type-checking,” said Mitchell of Acorn. Garbage collection is a process by which memory space that is no longer being used by a program can be reclaimed; run-time type-checking allows a program to determine the types of its arguments (whether integers, character strings, or floating-point numbers) and choose the operations it performs on them accordingly.
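Both features are easy to demonstrate in a language that has them built in. The sketch below is written in Python rather than Cedar, and the names `double` and `Buffer` are made up for illustration. It shows a function that inspects the type of its argument at run time, followed by an object whose memory is reclaimed once nothing refers to it.

```python
import gc
import weakref


def double(value):
    """Choose an operation according to the run-time type of the argument."""
    if isinstance(value, int):
        return value * 2            # integer arithmetic
    if isinstance(value, float):
        return value * 2.0          # floating-point arithmetic
    if isinstance(value, str):
        return value + value        # string concatenation
    raise TypeError(f"cannot double a {type(value).__name__}")


print(double(21), double(1.5), double("ab"))   # 42 3.0 abab


class Buffer:
    """Stand-in for some block of memory a program allocates."""


buf = Buffer()
probe = weakref.ref(buf)   # observes the object without keeping it alive
del buf                    # the program no longer uses the buffer
gc.collect()               # ask the collector to reclaim unused memory
print(probe() is None)     # True
```

The programmer never frees the buffer explicitly; the system notices that it is unreachable and reclaims it, which is the convenience Lisp programmers had and Mesa programmers gained with Cedar.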

Interlisp, the language Teitelman had nurtured for 15 years, also was transported to the Dorado, where it was the basis for a research effort that has now grown into the Intelligent Systems Laboratory at PARC.

PARC’s Smalltalk group, who had gotten used to their Altos and then built the Notetaker, another small computer, had some trouble dealing with the Dorados.

“In the early days, we had Smalltalk running on an Alto, and I got to take my Alto home,” recalled Ingalls. “But the evolution of machines at Xerox went in the opposite direction from making it easy to take the stuff home. The next machine, the Dolphin, was less transportable, and the Dorado is out of the question—it’s a fire-breathing dragon.”

New Horizons: The PARC Team Scatters

The Dorado was the last major project to be completed by PARC in the 1970s, and the last one nurtured by many of the researchers who had made PARC famous and who in turn had been made famous by the work they did at PARC. For these researchers, it was time to move on.

Alan Kay took a sabbatical beginning in March 1980 and never returned to PARC. Doug Fairbairn, Larry Tesler, and John Ellenby also left that year. In 1981 the exodus continued, with researchers including David Thornburg, Charles Simonyi, and Bert Sutherland packing their knapsacks. By June of 1984, John Warnock, Chuck Geschke, Lynn Conway, Dan Ingalls, Warren Teitelman, and Jim Mitchell had moved on. Bob Taylor had also left, taking a group of researchers with him that included Chuck Thacker and Butler Lampson.

Why the sudden rush for the doors?

There are probably as many reasons as there are people who left PARC. But several common threads emerge—natural career progression, frustration, the playing-out of PARC’s original charter, and a feeling among those who departed that it was time to make room for new blood. PARC hired many of its earliest employees right out of graduate school; they were roughly the same age as one another, and their careers matured along with PARC.

“If you look at a championship football or basketball team,” said Teitelman, “they have somebody sitting on the bench who could start on another team. Those people usually ask to be traded.”

“I saw personal computers happening without us. Xerox no longer seemed like where it was going to happen.”

—Larry Tesler

But some of those who left PARC recalled that a disillusionment had set in. They hadn’t been frustrated with the progression of their careers; rather, they had been frustrated with the rate of progression of their products into the real world.

“We really wanted to have an impact on the world,” Mitchell said. “That was one reason we built things, that we made real things; we wanted to have a chance of making an impact.”

And the world was finally ready for the PARC researchers, who until the late 1970s had few other places to go to continue the projects they were interested in. But by the early 1980s, other companies were making similar research investments and bringing the products of that research to the commercial marketplace.

“We got very frustrated by seeing things like the Lisa come out,” said Mitchell, “when there were better research prototypes of such systems inside PARC.”

“I saw personal computers happening without us,” said Tesler. “Xerox no longer seemed like where it was going to happen.” Tesler recalls trying to disabuse his colleagues of the notion that only PARC could build personal computers, after he met some Apple engineers.

“Bob Taylor was the guy that kept insisting, ‘We have all the smart people.’ I told him, ‘There are other smart people. There are some at Apple, and I’ll bet there are some at other places, too.’ ”

“‘Hire them,’ he said. I said, ‘We can’t get them all; there are hundreds of them out there, they are all over the place!’ At that moment I decided to leave.”

The exodus may have begun in 1980 also because it signified a new decade. Ten years were over, and the researchers had done what they felt they had signed on to do. But, some felt, Xerox had not kept up its end of the bargain: to take their research and develop it into the “office of the future.”

Some look unkindly on this “failure” of Xerox’s. Others are more philosophical.

“One of the worst things that Xerox ever did was to describe something as the office of the future, because if something is the office of the future, you never finish it,” Thornburg said. “There’s never anything to ship, because once it works, it’s the office of today. And who wants to work in the office of today?”

The departures may have proved beneficial for PARC’s long-term growth. Because few researchers left during the 1970s, there was not a great deal of room for hiring new people with new ideas.

“No biological organism can live in its own waste products,” Kay said. “If you have a closed system, it doesn’t matter how smart a being you have in there, it will eventually suffocate.”

The exodus not only made room for new blood and new ideas within PARC but also turned out to be an efficient method of transferring PARC’s ideas to the outside world, where they have rapidly turned into products.

Meanwhile, back at the lab, new research visions for PARC’s second decade have been seeded. Early efforts in VLSI have expanded, for example, to encompass a full range of fabrication and design facilities. William Spencer, now director of PARC, was the Integrated Circuits Laboratory’s first manager. The laboratory now does experimental fabrication for other areas of PARC and Xerox and is building the processor chips for the Dragon, PARC’s newest personal computer. Collaboration with several universities has led to a kit for integrating new chips into working computer systems.

PARC has also found additional ways of getting products on the market: researchers in the General Science Laboratory in 1984 founded a new company, Spectra Diode Laboratories, with Xerox and Spectra-Physics Inc. funding, to commercialize PARC research on semiconductor lasers.

Perhaps the strongest push in progress at PARC is in artificial intelligence, where the company is marketing Dandelion and Dorado computers that run Interlisp, along with PARC-developed AI tools. These include Loops, a software system that lets knowledge engineers combine rule-based expert systems with object-oriented programming and other useful styles of knowledge representation. Loops was developed by three PARC researchers; an AI Systems Business Unit, a marketing and development organization, has been formed at PARC.

PARC’s scattered AI groups have been consolidated into the Intelligent Systems Laboratory, which is doing research into qualitative reasoning, knowledge representation, and other topics. One interesting outgrowth of the early “office of the future” research is the Co-Lab, an experimental conference room that uses projection screens, the Ethernet, and half a dozen Dorados to help people work together and make decisions about complex projects.

The next decade of advances in computer science may come from PARC—from “my grown-up baby,” as Goldman puts it. Or they may come from somewhere else. But the “architects of information” who made PARC famous have no doubt that they will come.

“There is something about high technology, an excitement about being right out at the absolute edge and shoving as hard as we can because we can see where the digital revolution is going to go,” said Pixar’s Smith. “It has got to happen. I can’t imagine it not being exciting somewhere.”
